#Scrapebox only showing 100 results free

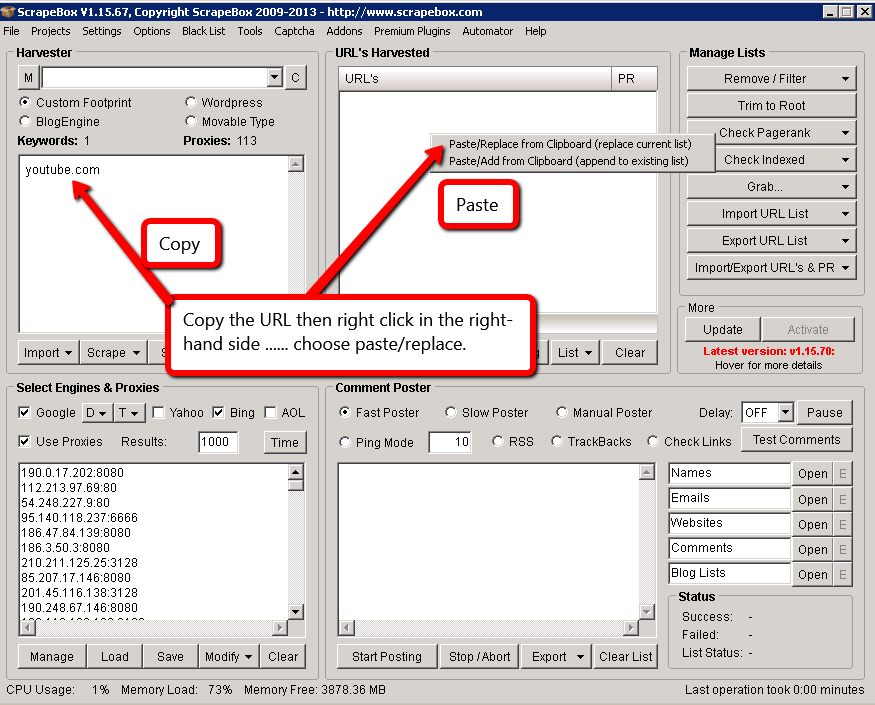

Here, feel free to copy and paste what I used:

You need to make sure they can manage your requirements.

#Scrapebox only showing 100 results full

You can use Scrapebox to automate the entire prospecting process. The tool uses proxies, allowing you to scrape thousands of search results with the click of a button. Simply input your list of search operators and export the resulting URLs into a spreadsheet. This saves you hours of manually searching Google for relevant results.

Scrapebox also has an addon that checks each harvested URL for broken links. This saves you another pain-in-the-ass step: uploading URLs into Xenu / Screaming Frog to crawl for 404 errors. With Scrapebox, a few clicks deliver an Excel document full of sites with broken links.

For more information on Scrapebox and broken link building, check out this post from Jacob King.

#Scrapebox only showing 100 results how to

I'm trying to master white hat link building, one tactic at a time. Last month it was landing online press; this month it's broken link building.

Most of the endpoints in Microsoft Graph return data in pages, and this includes `/users`. To retrieve the rest of the results, you need to loop through the pages:

```csharp
private async Task<List<User>> GetUsersFromGraph()
{
    if (_graphAPIConnectionDetails == null) ReadParametersFromXML();
    if (graphServiceClient == null) graphServiceClient = CreateGraphServiceClient();

    // Holds the users collected from every page
    var users = new List<User>();

    // Request the first page of users
    IGraphServiceUsersCollectionPage usersPage = await graphServiceClient
        .Users
        .Request()
        .GetAsync();

    // Add the first page of results to the user list
    users.AddRange(usersPage.CurrentPage);

    // Fetch each page and add those results to the list
    while (usersPage.NextPageRequest != null)
    {
        usersPage = await usersPage.NextPageRequest.GetAsync();
        users.AddRange(usersPage.CurrentPage);
    }

    return users;
}
```

One super important note here: this method is the least performant way to retrieve data from Graph (or any REST API, really). Your app will be sitting there for a long time while it downloads all this data. The proper methodology is to fetch each page and process just that page before fetching additional data.
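To make the "process each page before fetching the next" advice concrete, here is a minimal sketch. It assumes the same older Microsoft Graph .NET SDK surface as the `IGraphServiceUsersCollectionPage` type mentioned above (the `Request()` style, which newer SDK versions no longer use). `UserPager`, `ProcessUsersPageByPageAsync` and the `processPage` callback are hypothetical names made up for illustration, not part of the SDK.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;
using Microsoft.Graph;

public static class UserPager
{
    // Hypothetical helper: hands each page of users to processPage
    // as soon as it arrives, instead of building one large list first.
    public static async Task ProcessUsersPageByPageAsync(
        GraphServiceClient graphServiceClient,
        Func<IList<User>, Task> processPage)
    {
        // Request the first page of users
        IGraphServiceUsersCollectionPage usersPage = await graphServiceClient
            .Users
            .Request()
            .GetAsync();

        while (usersPage != null)
        {
            // Process only the page currently in memory
            await processPage(usersPage.CurrentPage);

            // Fetch the next page only after this one has been handled;
            // a null NextPageRequest means there are no more pages
            usersPage = usersPage.NextPageRequest != null
                ? await usersPage.NextPageRequest.GetAsync()
                : null;
        }
    }
}
```

A caller might pass something like `page => SaveToDatabaseAsync(page)` (again, a hypothetical method) as `processPage`. The point of the design is that only one page of users is held in memory at a time, and useful work starts after the first response rather than after the last.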